ForeHumanity

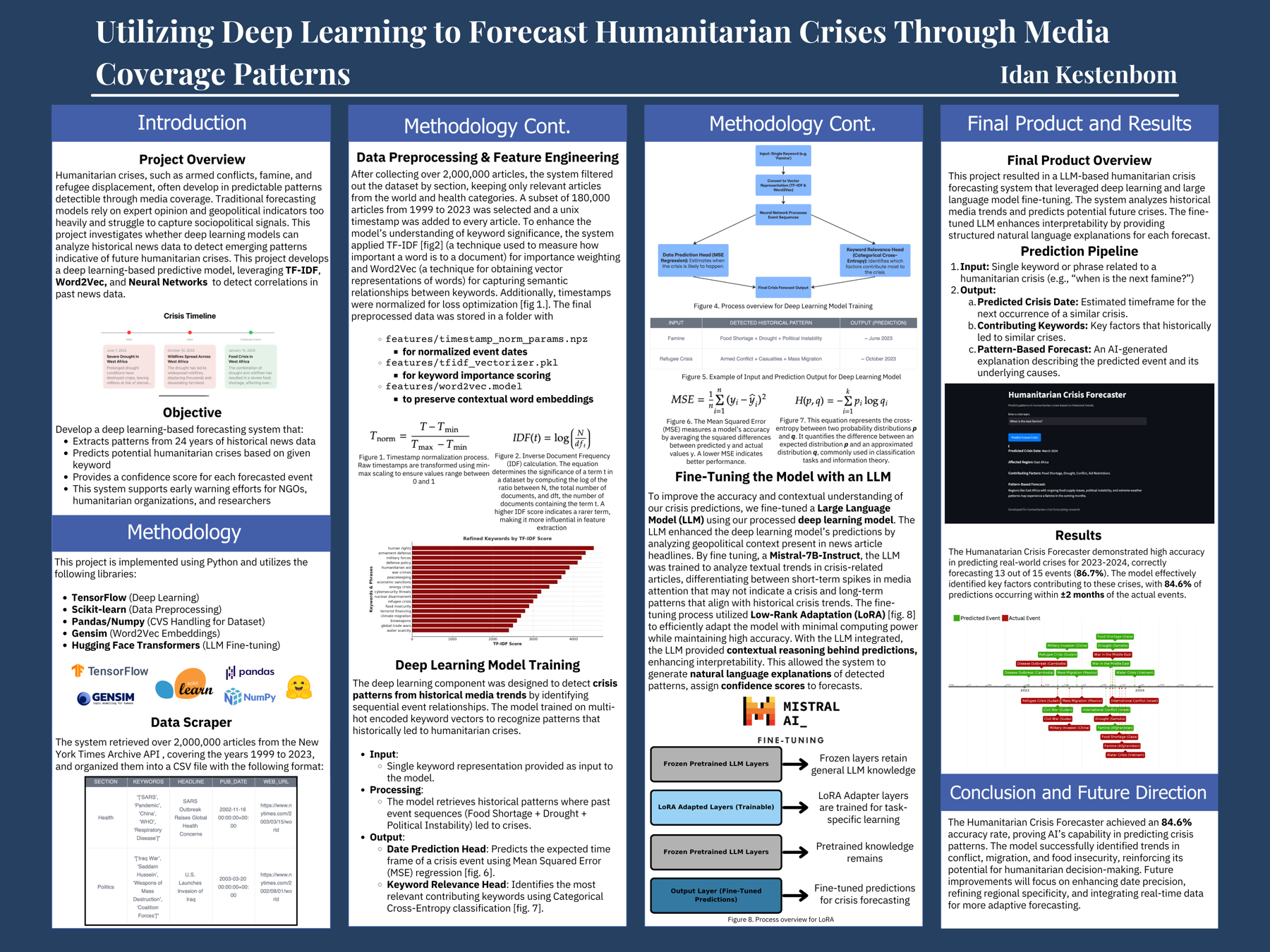

A deep learning system that forecasts where the next humanitarian crisis is likely to break out, trained on roughly 2 million New York Times articles spanning 24 years. A LoRA-fine-tuned Mistral 7B sits on top of the forecaster and explains each prediction in natural language.

Crises don't appear. They surface.

Famines, conflicts, and refugee surges are usually visible in the news weeks or months before relief organizations mobilize. The pattern is in the coverage. The hard part is reading it at scale.

Existing crisis-response systems are reactive. By the time UNHCR, WHO, or a national government acts, the crisis has already cost lives. Forecasting tools exist (FEWS NET, ACAPS) but rely on hand-curated indicators and operate on monthly cadences.

ForeHumanity asks a simpler question: can a model trained on the language of crisis reporting predict where the next one will surface? If the answer is yes, even by a few weeks, the lead time is enough to pre-position aid.

2 million articles, 24 years, structured for learning.

Every article from the NYT archive between 2000 and 2024, parsed for keywords, section, and timestamp. Multi-hot encoded. Time normalized. Used as supervised signal against a labeled crisis ledger.
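As a rough illustration of the encoding described above, the sketch below multi-hot encodes keyword lists against a fixed vocabulary and min-max scales timestamps into [0, 1]. The function names, the toy vocabulary, and the sample records are assumptions made for illustration; the project's own preprocessing helpers may work differently.

```python
def build_vocabulary(keyword_lists):
    """Map each distinct keyword to a column index, in sorted order."""
    vocab = sorted({kw for kws in keyword_lists for kw in kws})
    return {kw: i for i, kw in enumerate(vocab)}


def multi_hot(keywords, vocab):
    """Return a 0/1 vector with a 1 at the index of every keyword present."""
    vec = [0] * len(vocab)
    for kw in keywords:
        if kw in vocab:  # keywords outside the vocabulary are ignored
            vec[vocab[kw]] = 1
    return vec


def min_max_scale(ts, min_ts, max_ts):
    """Scale a Unix timestamp linearly into [0, 1]."""
    return (ts - min_ts) / (max_ts - min_ts)


# Toy records, not taken from the real archive.
articles = [
    {"keywords": ["famine", "drought"], "ts": 946684800},    # 2000-01-01 UTC
    {"keywords": ["refugees", "famine"], "ts": 1704067200},  # 2024-01-01 UTC
]

vocab = build_vocabulary([a["keywords"] for a in articles])
vectors = [multi_hot(a["keywords"], vocab) for a in articles]

min_ts = min(a["ts"] for a in articles)
max_ts = max(a["ts"] for a in articles)
times = [min_max_scale(a["ts"], min_ts, max_ts) for a in articles]
```

With the sorted vocabulary `drought, famine, refugees`, the two toy articles become `[1, 1, 0]` and `[0, 1, 1]`, and their timestamps scale to `0.0` and `1.0`.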

Two models. One pipeline.

A TensorFlow/Keras regression model predicts when the next crisis tied to a keyword will surface. A LoRA-fine-tuned Mistral 7B explains why.

The forecaster (Keras)

Dense network over multi-hot keyword vectors plus section embeddings. Output is a normalized timestamp in [0, 1] that gets denormalized back into a real date. Trained with MSE loss on labeled crisis events.
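Written out, the training target and loss described above might look like the following. This is a sketch that assumes linear min-max normalization, which is consistent with the `denormalize_timestamp` helper in `app.py`; the symbols ($t_i$ for the true event time, $\hat{y}_i$ for the network output, $N$ for the number of labeled events) are introduced here for illustration.

```latex
\tilde{t}_i = \frac{t_i - t_{\min}}{t_{\max} - t_{\min}}, \qquad
\mathcal{L} = \frac{1}{N} \sum_{i=1}^{N} \left( \hat{y}_i - \tilde{t}_i \right)^2, \qquad
\hat{t}_i = \hat{y}_i \, (t_{\max} - t_{\min}) + t_{\min}
```

One consequence worth keeping in mind: an error of $\delta$ in normalized units equals $\delta \, (t_{\max} - t_{\min})$ in real time. If the normalization range covered the full 24-year archive (about 8,766 days), a normalized error of 0.01 would correspond to roughly 88 days, so a small-looking MSE can still hide a large error in the predicted date.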

The explainer (Mistral 7B + LoRA)

Mistral 7B base, LoRA-fine-tuned on a curated dataset of crisis explainers. Adapters live in lora_mistral_ckpt/. Runs on CPU with the peft + transformers stack. Generates a natural-language rationale for each prediction.

    # app.py · Streamlit forecasting interface
    import datetime

    import streamlit as st
    from transformers import AutoTokenizer, AutoModelForCausalLM

    from data_preprocessing import (load_and_clean_data, parse_keywords,
                                    normalize_timestamp, create_multi_hot_vectors)


    @st.cache_resource
    def load_lora_model(lora_path="./lora_mistral_ckpt"):
        # Cached so the model is loaded once, not on every Streamlit rerun.
        tokenizer = AutoTokenizer.from_pretrained(lora_path)
        model = AutoModelForCausalLM.from_pretrained(lora_path).to("cpu")
        return tokenizer, model


    def denormalize_timestamp(norm_val, min_ts, max_ts):
        # Map a value in [0, 1] back to a Unix timestamp, then to a UTC datetime.
        pred_ts = norm_val * (max_ts - min_ts) + min_ts
        # utcfromtimestamp() is deprecated since Python 3.12; use an aware datetime.
        return datetime.datetime.fromtimestamp(pred_ts, tz=datetime.timezone.utc)
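To make the denormalization step concrete, here is a small self-contained example. It redefines a timezone-aware variant of the helper so the block runs on its own, and it assumes a normalization range from 2000-01-01 to 2024-01-01 UTC; the real `min_ts` and `max_ts` come from the training data and are not stated here.

```python
import datetime


def denormalize_timestamp(norm_val, min_ts, max_ts):
    """Map a model output in [0, 1] back to a UTC datetime."""
    pred_ts = norm_val * (max_ts - min_ts) + min_ts
    return datetime.datetime.fromtimestamp(pred_ts, tz=datetime.timezone.utc)


# ASSUMED range for illustration: 2000-01-01 to 2024-01-01 (UTC).
MIN_TS = 946684800
MAX_TS = 1704067200

# A model output of 0.5 lands exactly halfway through the range.
predicted = denormalize_timestamp(0.5, MIN_TS, MAX_TS)
print(predicted.date())  # 2012-01-01
```

The midpoint falls on 2012-01-01 because each 12-year half of this range contains the same number of leap days.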

Predictions you can interrogate.

A bare model output (a date) is not a useful product for a humanitarian operator. ForeHumanity wraps each prediction in a generated rationale that names the keywords driving the forecast and the historical patterns the model is matching against.

The Mistral adapter was trained on prompt-completion pairs derived from the labeled crisis ledger plus surrounding NYT context. The result: when a user enters famine, the system both predicts a date and writes a paragraph explaining which historical episodes the prediction resembles and what early-warning indicators are most active.
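For readers unfamiliar with prompt-completion fine-tuning data, one record might be assembled along these lines. The field names, the prompt template, and the placeholder values are all hypothetical; the source does not describe the actual format used to train the adapter.

```python
import json


def make_training_pair(keyword, event_date, context_snippets, rationale):
    """Build one prompt-completion record for supervised fine-tuning."""
    context = "\n".join(f"- {s}" for s in context_snippets)
    prompt = (
        f"Keyword: {keyword}\n"
        f"Forecast date: {event_date}\n"
        f"Related coverage:\n{context}\n"
        "Explain which historical episodes this forecast resembles "
        "and which early-warning indicators are active."
    )
    return {"prompt": prompt, "completion": rationale}


# Placeholder values only; none of this is real ledger or archive data.
pair = make_training_pair(
    keyword="famine",
    event_date="2012-01-01",
    context_snippets=["placeholder headline A", "placeholder headline B"],
    rationale="placeholder rationale text",
)

# One JSON object per line (JSONL) is a common on-disk layout for such pairs.
line = json.dumps(pair)
```

Keeping the prompt template identical between training and inference matters here: the Streamlit app would need to build its prompt the same way for the adapter to respond as trained.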

The choice of LoRA over full fine-tuning was deliberate. The adapters are 65 MB versus Mistral's 14 GB, training ran on a single consumer GPU, and the explainer can be retrained as new crisis data lands without retraining the whole base model.
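The size gap follows from how LoRA works: instead of updating a full weight matrix, it trains two low-rank factors per targeted matrix. The sketch below counts the trainable parameters for one hypothetical configuration. The layer shapes are Mistral 7B's published attention dimensions, but the rank and the choice of target modules are assumptions, so the total is not meant to reproduce the 65 MB figure.

```python
def lora_params(d_in, d_out, rank):
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix.

    LoRA learns two factors: A with shape (rank, d_in) and
    B with shape (d_out, rank).
    """
    return rank * (d_in + d_out)


# Mistral 7B attention shapes: hidden size 4096, 32 layers, and
# grouped-query attention giving the k/v projections an output width of 1024.
HIDDEN, KV_DIM, LAYERS = 4096, 1024, 32

# ASSUMPTION: rank 16 applied to q_proj and v_proj only. The project's
# actual rank and target modules are not stated.
RANK = 16

per_layer = lora_params(HIDDEN, HIDDEN, RANK) + lora_params(HIDDEN, KV_DIM, RANK)
total = per_layer * LAYERS

# For comparison: parameters in a single full q_proj matrix.
full_q_proj = HIDDEN * HIDDEN

print(f"LoRA parameters: {total:,}")
print(f"One full q_proj: {full_q_proj:,}")
```

Under these assumptions the adapter holds about 6.8 million trainable parameters, fewer than one full `q_proj` matrix (about 16.8 million) and a tiny fraction of the roughly 7 billion parameters in the base model. A higher rank or more target modules would increase the count proportionally.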

Recognized for impact.

Evaluation uses a held-out 20% of crisis ledger events not seen during training.